Claude Code computer automation refers to an AI system that can execute tasks directly on your computer, including coding, file management, and workflow automation. Unlike traditional tools, it performs multi-step actions autonomously, reducing manual effort and increasing productivity across development and business environments.

KumDi.com

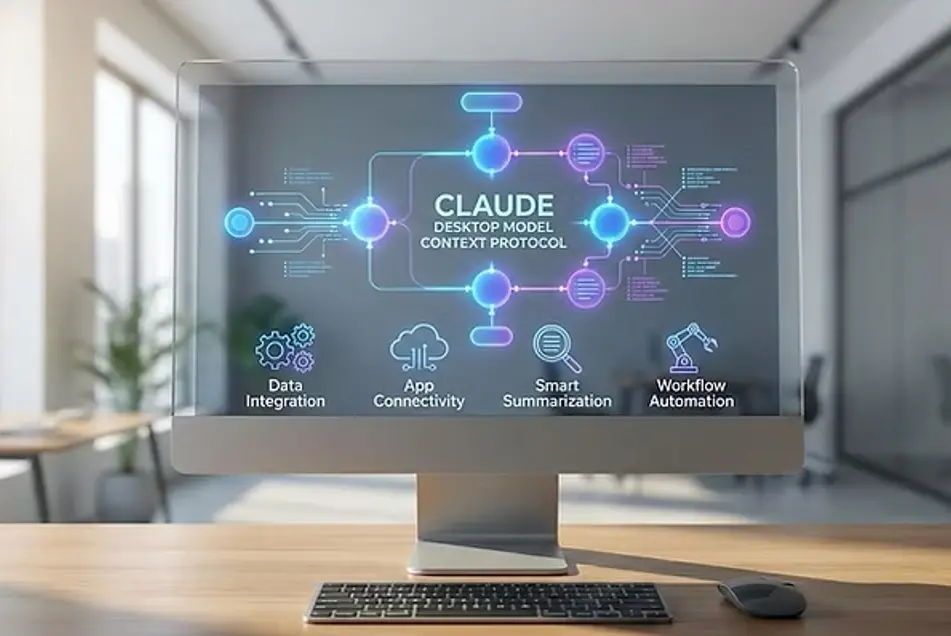

The latest evolution of AI assistants, particularly tools developed by Anthropic in the Claude ecosystem, introduces agentic coding systems that interact directly with your computer environment. In practical terms, Claude Code can execute tasks on your behalf, such as editing files, running code, navigating a codebase, and automating workflows, rather than simply generating text-based suggestions.

Unlike traditional AI copilots, which require manual execution of outputs, Claude Code operates as a semi-autonomous software agent. It interprets goals, plans actions, interacts with your operating system or development environment, and completes multi-step tasks with minimal human intervention.

Understanding Claude Code: Definition and Core Function

Claude Code refers to an advanced AI coding agent designed to:

- Interpret natural language instructions

- Access local or cloud-based development environments

- Execute commands (e.g., file operations, terminal commands)

- Iterate based on feedback or errors

Simple Definition

Claude Code is an AI-powered autonomous coding agent that can execute programming and system-level tasks directly on a user’s computer environment, reducing the need for manual intervention.

How Claude Code Works (Mechanism Explained)

Conceptually, Claude Code's operation can be described as a combination of:

1. Natural Language Understanding (NLU)

Users provide high-level instructions such as:

- “Fix all broken imports in this project”

- “Deploy this app to a staging server”

The system translates intent into executable steps.

2. Task Planning Engine

The AI breaks down instructions into smaller actions:

- Identify relevant files

- Analyze dependencies

- Generate code patches

- Validate outputs
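As a rough illustration of the planning stage, the sketch below represents a plan as an ordered list of steps and works through them one at a time. The `Plan` and `Step` classes and the fixed four-stage template are hypothetical stand-ins for the model's own planning, not Claude Code internals.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Step:
    """One concrete action in a plan (hypothetical structure)."""
    name: str
    done: bool = False


@dataclass
class Plan:
    goal: str
    steps: list[Step] = field(default_factory=list)

    def next_step(self) -> Step | None:
        # The first unfinished step is the next thing to execute.
        for step in self.steps:
            if not step.done:
                return step
        return None


def plan_for(goal: str) -> Plan:
    # A fixed template standing in for model-generated planning:
    # the four stages listed above, in order.
    stages = [
        "identify relevant files",
        "analyze dependencies",
        "generate code patches",
        "validate outputs",
    ]
    return Plan(goal, [Step(s) for s in stages])


plan = plan_for("Fix all broken imports in this project")
executed = []
while (step := plan.next_step()) is not None:
    executed.append(step.name)  # a real agent would act here
    step.done = True
```

In a real agent the plan would be produced and revised by the model as results come in; the fixed template only shows the shape of the data.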

3. Environment Interaction Layer

This is the key innovation. Claude Code can:

- Open and edit files

- Run terminal commands

- Install dependencies

- Execute scripts
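A minimal sketch of such an interaction layer, assuming the agent requests actions by tool name. The tool names and the `dispatch` helper are hypothetical; Claude Code's actual tools are internal to the product.

```python
from __future__ import annotations

import subprocess
import sys
import tempfile
from pathlib import Path


def read_file(path: str) -> str:
    return Path(path).read_text(encoding="utf-8")


def write_file(path: str, content: str) -> None:
    p = Path(path)
    p.parent.mkdir(parents=True, exist_ok=True)  # create folders as needed
    p.write_text(content, encoding="utf-8")


def run_command(args: list[str], cwd: str | None = None) -> tuple[int, str, str]:
    # No shell: arguments are passed as-is. Output is captured so the
    # agent can inspect it before deciding on its next action.
    result = subprocess.run(args, cwd=cwd, capture_output=True, text=True)
    return result.returncode, result.stdout, result.stderr


TOOLS = {"read_file": read_file, "write_file": write_file, "run_command": run_command}


def dispatch(tool: str, **kwargs):
    # The planner names a tool; the interaction layer looks it up and calls it.
    if tool not in TOOLS:
        raise ValueError(f"unknown tool: {tool}")
    return TOOLS[tool](**kwargs)


# Usage: write a script, run it, and capture what it printed.
with tempfile.TemporaryDirectory() as workdir:
    script = str(Path(workdir) / "hello.py")
    dispatch("write_file", path=script, content="print('hi')\n")
    code, out, err = dispatch("run_command", args=[sys.executable, script])
```

Returning the exit code and both output streams, rather than raising on failure, is a deliberate choice: the feedback loop needs to see errors as data it can reason about.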

4. Iterative Feedback Loop

If errors occur:

- The system detects failures

- Diagnoses issues

- Applies corrections

This creates a closed-loop autonomous workflow, similar to how a human developer debugs code.
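The loop can be sketched as a function that runs a check, hands any error to a fixer, and re-checks, with a cap on attempts so a bad fix cannot loop forever. The `check` and `apply_fix` callables are hypothetical placeholders (in practice, a test run and a model-generated patch); the demo simulates them with an in-memory set of missing modules.

```python
from __future__ import annotations

from typing import Callable


def fix_until_passing(
    check: Callable[[], tuple[bool, str]],
    apply_fix: Callable[[str], None],
    max_fixes: int = 3,
) -> bool:
    """Detect, diagnose, correct, and re-check until the check passes.

    Gives up after `max_fixes` correction attempts and returns False.
    """
    ok, error = check()          # detect: run tests, a build, or a script
    fixes = 0
    while not ok and fixes < max_fixes:
        apply_fix(error)         # diagnose and correct based on the error text
        fixes += 1
        ok, error = check()      # re-run to see whether the fix worked
    return ok


# Simulated project: two modules are missing, one error surfaces per run.
missing = {"numpy", "requests"}


def check() -> tuple[bool, str]:
    if missing:
        return False, f"ModuleNotFoundError: No module named '{min(missing)}'"
    return True, ""


def apply_fix(error: str) -> None:
    # Pull the module name out of the error message and "install" it.
    missing.discard(error.split("'")[1])


result = fix_until_passing(check, apply_fix)
```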

Real-World Use Cases (Professional Applications)

1. Automated Code Refactoring

A developer managing a large codebase can instruct:

“Refactor this repository to follow modern TypeScript standards.”

Claude Code can then:

- Scan all files

- Update syntax

- Fix type definitions

- Ensure compatibility

2. Debugging and Error Resolution

Instead of manually tracing bugs:

- The AI reads stack traces

- Locates root causes

- Applies fixes automatically
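To make "locates root causes" more concrete, here is a small sketch that reads a Python traceback and returns the innermost frame, where the exception was raised. This is an assumed heuristic for illustration, not how Claude Code analyzes errors, and the innermost frame is only a starting point: the actual root cause may sit in a caller.

```python
from __future__ import annotations

import re

# One frame line of a standard Python traceback.
FRAME_RE = re.compile(r'File "(?P<file>[^"]+)", line (?P<line>\d+), in (?P<func>\S+)')


def locate_failure(traceback_text: str) -> dict | None:
    """Return file, line, function, and error message for the innermost frame."""
    frames = [m.groupdict() for m in FRAME_RE.finditer(traceback_text)]
    if not frames:
        return None
    innermost = frames[-1]  # frames are listed outermost first
    innermost["line"] = int(innermost["line"])
    # The final line of the traceback holds the exception type and message.
    innermost["error"] = traceback_text.strip().splitlines()[-1]
    return innermost


sample = """Traceback (most recent call last):
  File "app.py", line 10, in <module>
    main()
  File "app.py", line 6, in main
    total = add(1, "2")
  File "utils.py", line 2, in add
    return a + b
TypeError: unsupported operand type(s) for +: 'int' and 'str'
"""
where = locate_failure(sample)
```

Here `where` points at `utils.py`, line 2, in `add`, although the bad argument actually originates one frame up in `main`, which is why an agent still has to reason about the call chain.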

3. DevOps Automation

Tasks such as:

- CI/CD setup

- Docker configuration

- Cloud deployment

can be executed end-to-end without switching tools.

4. Data Pipeline Management

Claude Code can:

- Clean datasets

- Modify scripts

- Schedule processing jobs
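As an example of the kind of dataset cleanup an agent might write and run, the sketch below uses Python's standard `csv` module to trim whitespace, drop empty rows, and remove exact duplicates. The cleaning rules are assumptions chosen for illustration; a real pipeline would define its own.

```python
from __future__ import annotations

import csv
import io


def clean_rows(text: str) -> list[dict[str, str]]:
    """Trim whitespace, drop empty rows, and remove exact duplicate rows."""
    reader = csv.DictReader(io.StringIO(text))
    seen: set[tuple[str, ...]] = set()
    cleaned = []
    for raw in reader:
        # Strip keys and values; missing values become "", extra fields are ignored.
        row = {k.strip(): (v or "").strip() for k, v in raw.items() if k is not None}
        if not any(row.values()):
            continue  # every field empty
        key = tuple(row.values())
        if key in seen:
            continue  # duplicate of a row already kept
        seen.add(key)
        cleaned.append(row)
    return cleaned


raw_csv = "name, city\n Alice , Paris\n,\nBob,Berlin\nAlice,Paris\n"
rows = clean_rows(raw_csv)
```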

5. Non-Developer Productivity

Even non-engineers can:

- Automate Excel workflows

- Organize files

- Generate reports
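For the "organize files" case, a script like the following is the sort of thing an agent could generate and run: it moves each file into a subfolder named after its extension. The naming scheme (lowercased extension, `other` for files without one) is a hypothetical choice.

```python
from __future__ import annotations

import shutil
import tempfile
from pathlib import Path


def organize_by_extension(folder: str) -> dict[str, int]:
    """Move each file in `folder` into a subfolder named after its extension.

    Files without an extension go to "other". Returns a count per subfolder.
    """
    root = Path(folder)
    counts: dict[str, int] = {}
    for item in list(root.iterdir()):  # snapshot before creating subfolders
        if not item.is_file():
            continue
        group = item.suffix.lstrip(".").lower() or "other"
        target = root / group
        target.mkdir(exist_ok=True)
        shutil.move(str(item), str(target / item.name))
        counts[group] = counts.get(group, 0) + 1
    return counts


with tempfile.TemporaryDirectory() as d:
    for name in ["a.pdf", "b.PDF", "notes.txt", "README"]:
        (Path(d) / name).write_text("demo", encoding="utf-8")
    summary = organize_by_extension(d)
    layout = sorted(p.relative_to(d).as_posix() for p in Path(d).rglob("*") if p.is_file())
```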

Key Advantages Over Traditional AI Assistants

| Feature | Traditional AI Tools | Claude Code |
|---|---|---|
| Output type | Suggestions only | Direct execution |
| Workflow | Manual | Automated |
| Error handling | User-dependent | Iterative self-correction |
| Task scope | Single-step | Multi-step |
| System access | Limited | Integrated (files, terminal) |

Risks and Limitations (Critical Evaluation)

While powerful, this technology introduces important risks that must be understood.

1. Security Risks

Allowing AI to control your system can expose:

- Sensitive files

- API keys

- Internal codebases

Mitigation:

- Sandbox environments

- Permission-based execution

- Activity logging

2. Error Amplification

Autonomous systems can:

- Misinterpret instructions

- Apply incorrect fixes at scale

Example:

A faulty refactor could break an entire application.
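One way to contain this risk is a size guard: measure how large a proposed change is and require human review above a threshold. The sketch below compares before and after snapshots with `difflib.SequenceMatcher`; the function names and the default thresholds of 5 files and 200 lines are assumptions, not Claude Code settings.

```python
from __future__ import annotations

import difflib


def change_size(old: dict[str, str], new: dict[str, str]) -> tuple[int, int]:
    """Count files touched and lines added plus removed between two snapshots."""
    files = lines = 0
    for path in sorted(set(old) | set(new)):
        before = old.get(path, "").splitlines()
        after = new.get(path, "").splitlines()
        matcher = difflib.SequenceMatcher(a=before, b=after, autojunk=False)
        changed = sum(
            (i2 - i1) + (j2 - j1)  # removed lines + added lines
            for tag, i1, i2, j1, j2 in matcher.get_opcodes()
            if tag != "equal"
        )
        if changed:
            files += 1
            lines += changed
    return files, lines


def needs_review(old: dict[str, str], new: dict[str, str],
                 max_files: int = 5, max_lines: int = 200) -> bool:
    # Large changes go to a human instead of being applied automatically.
    files, lines = change_size(old, new)
    return files > max_files or lines > max_lines


old = {"src/a.py": "x = 1\ny = 2\n"}
new = {"src/a.py": "x = 1\ny = 3\n", "src/b.py": "print(1)\n"}
```

Here `change_size(old, new)` counts two files and three changed lines (one line removed and one added in `a.py`, one line added in the new `b.py`), so the change passes under the default limits.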

3. Over-Automation Risk

Users may:

- Lose visibility into processes

- Become overly dependent on AI

4. Compliance and Governance

Industries like healthcare and finance must ensure:

- Data privacy (HIPAA, GDPR)

- Audit trails

- Human oversight

Safety Architecture (How Claude Code Stays Controlled)

Modern agentic tools, Claude Code included, typically combine several safeguards:

Permission Layers

- Read-only vs write access

- Command execution restrictions
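Claude Code itself is configured through allow and deny permission rules in its settings files. The sketch below illustrates only the general idea, with an invented `action:target` pattern syntax that is not Claude Code's: deny rules take precedence, a read-only mode blocks everything except reads, and anything unmatched falls back to asking the user.

```python
from __future__ import annotations

from fnmatch import fnmatchcase


class Permissions:
    """Hypothetical policy: deny rules win, then read-only mode, then allow rules."""

    def __init__(self, allow: list[str], deny: list[str], read_only: bool = False):
        self.allow = allow
        self.deny = deny
        self.read_only = read_only

    def decide(self, action: str, target: str) -> str:
        # Requests are matched as "action:target", e.g. "run:npm test".
        request = f"{action}:{target}"
        if any(fnmatchcase(request, rule) for rule in self.deny):
            return "deny"
        if self.read_only and action != "read":
            return "deny"
        if any(fnmatchcase(request, rule) for rule in self.allow):
            return "allow"
        return "ask"  # unmatched: fall back to asking the user


policy = Permissions(
    allow=["read:*", "run:npm test*"],
    deny=["read:*.env", "run:rm *"],
)
```

`fnmatchcase` is used instead of `fnmatch` so that matching is case-sensitive on every operating system.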

Human-in-the-Loop Systems

Users approve:

- Critical actions

- Deployment steps
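An approval gate can be as simple as a function that pauses before any action on a critical list. In this sketch the action names in `CRITICAL` are hypothetical, and the `approve` callback defaults to a terminal prompt but could be swapped for a chat or code-review interface.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical action names that always require sign-off.
CRITICAL = {"deploy", "delete_files", "migrate_database"}


def ask_user(action: str) -> bool:
    # Default approver: a yes/no prompt in the terminal.
    return input(f"Allow '{action}'? [y/N] ").strip().lower() == "y"


def execute(action: str, run: Callable[[], str],
            approve: Callable[[str], bool] = ask_user) -> str:
    """Run `run()` directly, unless `action` is critical and not approved."""
    if action in CRITICAL and not approve(action):
        return f"skipped {action}: not approved"
    return run()
```

Passing the approver in as a parameter keeps the gate testable and lets the same policy run in a terminal, a web UI, or a CI pipeline.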

Sandboxing

Tasks can be executed in:

- Isolated environments

- Virtual machines
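A lightweight approximation, assuming only the Python standard library, runs a command in a throwaway directory with a stripped-down environment and a timeout. This is not real isolation (the process still runs as the current user); virtual machines or containers, as listed above, are needed for that.

```python
from __future__ import annotations

import os
import subprocess
import sys
import tempfile


def run_isolated(args: list[str], timeout: float = 30.0) -> subprocess.CompletedProcess:
    """Run a command in a scratch directory with a minimal environment.

    Limits accidental damage only. This is NOT a security sandbox: the
    process runs as the current user and can still reach other files.
    Raises subprocess.TimeoutExpired if the command runs too long.
    """
    # Pass through only what is needed to start programs, so API keys and
    # other secrets in the parent environment are not inherited.
    keep = ("PATH", "SYSTEMROOT")  # SYSTEMROOT is required on Windows
    env = {k: os.environ[k] for k in keep if k in os.environ}
    with tempfile.TemporaryDirectory() as scratch:
        return subprocess.run(
            args, cwd=scratch, env=env,
            capture_output=True, text=True, timeout=timeout,
        )


# Usage: the child process cannot see a secret set in the parent.
os.environ["DEMO_API_KEY"] = "not-a-real-key"
result = run_isolated([sys.executable, "-c",
                       "import os; print(os.environ.get('DEMO_API_KEY'))"])
```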

Logging and Traceability

Actions can be recorded for:

- Debugging

- Compliance
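A minimal audit trail can be an append-only JSON Lines file with one record per action. The `AuditLog` class and its field names are hypothetical; the point is that each entry captures what was done, to what, and with what outcome.

```python
from __future__ import annotations

import json
import tempfile
import time
from pathlib import Path


class AuditLog:
    """Append-only JSON Lines log with one record per agent action."""

    def __init__(self, path: str | Path):
        self.path = Path(path)

    def record(self, action: str, target: str, outcome: str) -> None:
        entry = {"ts": time.time(), "action": action,
                 "target": target, "outcome": outcome}
        # Append mode adds to the end of the file and never rewrites earlier entries.
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def entries(self) -> list[dict]:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]


with tempfile.TemporaryDirectory() as d:
    log = AuditLog(Path(d) / "agent-audit.jsonl")
    log.record("edit", "src/App.tsx", "ok")
    log.record("run", "npm test", "exit code 1")
    history = log.entries()
```

One JSON object per line keeps the log easy to append to and easy to search later with standard tools.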

Step-by-Step Example: How Claude Code Completes a Task

Scenario: Fixing a Broken Web Application

User Instruction:

“Fix errors and make this React app run locally.”

AI Workflow:

- Scan project files

- Identify dependency issues

- Update package.json

- Install missing libraries

- Fix syntax errors

- Run development server

- Verify successful execution

Outcome:

A fully functioning app—without manual debugging.
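The "identify dependency issues" step can be sketched as comparing the packages a project imports with those declared in `package.json`. This is a regex-based heuristic written for illustration: it handles `import ... from`, bare `import`, and `require()` forms, and skips relative paths and `node:` built-ins. It would miss unusual syntax and would wrongly flag unprefixed Node built-ins such as `fs`.

```python
from __future__ import annotations

import json
import re

# Matches the module string after `from`, a bare `import`, or `require(`.
IMPORT_RE = re.compile(r"""(?:\bfrom|\bimport|\brequire\()\s*['"]([^'"]+)['"]""")


def package_name(spec: str) -> str | None:
    """Map an import specifier to the npm package it comes from."""
    if spec.startswith((".", "/", "node:")):
        return None  # local file or Node built-in, not an npm dependency
    parts = spec.split("/")
    if spec.startswith("@"):
        return "/".join(parts[:2])  # scoped: @mui/material/Button -> @mui/material
    return parts[0]  # subpath: lodash/fp -> lodash


def missing_dependencies(sources: dict[str, str], package_json: str) -> set[str]:
    manifest = json.loads(package_json)
    declared = set(manifest.get("dependencies", {})) | set(
        manifest.get("devDependencies", {}))
    used = set()
    for text in sources.values():
        for spec in IMPORT_RE.findall(text):
            name = package_name(spec)
            if name:
                used.add(name)
    return used - declared


sources = {
    "src/App.jsx": (
        "import React, { useState } from 'react';\n"
        "import axios from \"axios\";\n"
        "import './App.css';\n"
        "import Button from '@mui/material/Button';\n"
    ),
    "src/utils.js": "const fp = require('lodash/fp');\n",
}
package_json = '{"dependencies": {"react": "^18.2.0"}}'
missing = missing_dependencies(sources, package_json)
```

The resulting set (`axios`, `@mui/material`, `lodash`) is what would then be added to `package.json` and installed before the development server is started.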

Comparison with Other AI Agents (2026 Landscape)

Claude Code belongs to a broader category of agentic AI systems, alongside:

- Autonomous IDE assistants

- AI DevOps agents

- Workflow automation bots

However, Claude Code is often distinguished by:

- Strong reasoning capabilities

- A permission-based execution design that asks before editing files or running commands

- Reportedly lower hallucination rates than earlier models

Practical Recommendations for Users

For Developers

- Start in sandbox environments

- Use version control (Git) before automation

- Review AI-generated changes
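Before handing a repository to an agent, it helps to confirm that nothing is uncommitted, so every AI change shows up as a reviewable diff. The sketch below wraps `git status --porcelain`, which prints one line per changed or untracked file and nothing for a clean tree. The helper names are hypothetical.

```python
from __future__ import annotations

import subprocess


def parse_porcelain(output: str) -> list[str]:
    """Extract paths from `git status --porcelain` output.

    Each line is two status characters, a space, then the path.
    """
    return [line[3:] for line in output.splitlines() if line.strip()]


def working_tree_clean(repo: str = ".") -> bool:
    # Empty porcelain output means no staged, unstaged, or untracked changes.
    result = subprocess.run(
        ["git", "status", "--porcelain"],
        cwd=repo, capture_output=True, text=True, check=True,
    )
    return not parse_porcelain(result.stdout)
```

A wrapper script could refuse to start the agent, or ask the user to commit first, whenever `working_tree_clean()` returns False.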

For Businesses

- Implement governance policies

- Restrict access to sensitive systems

- Train teams on AI oversight

For Beginners

- Use Claude Code for:

  - Learning programming

  - Automating repetitive tasks

- Avoid high-risk system operations initially

Future Outlook (2026 and Beyond)

Claude Code represents a shift toward:

1. Fully Autonomous Software Development

AI agents may:

- Build applications from scratch

- Maintain systems continuously

2. Human-AI Collaboration Models

Developers transition from:

- Writing code → Supervising AI

3. Cross-System Integration

AI will manage:

- Local machines

- Cloud infrastructure

- Enterprise systems

Key Takeaways

- Claude Code is an autonomous AI agent capable of executing real tasks on your computer.

- It transforms AI from a passive assistant into an active operator.

- While highly efficient, it requires careful oversight, security controls, and responsible use.

- It represents a major step toward AI-driven automation across software development and beyond.

Final Insight

Claude Code is not just another AI tool—it is part of a fundamental transition toward agentic computing, where software no longer waits for commands but actively completes objectives. The key challenge for users and organizations is not whether to adopt it, but how to use it responsibly and effectively in real-world environments.

FAQs

What is Claude Code computer automation?

Claude Code computer automation refers to an autonomous coding assistant executing tasks directly on your computer. It combines direct control of files and the terminal with workflow automation to perform coding, debugging, and system operations without manual execution.

How does Claude Code differ from traditional AI tools?

Traditional AI tools only generate suggestions; Claude Code executes real actions. As an autonomous coding assistant, it interacts with files, terminals, and applications in real time to carry out multi-step tasks.

Is Claude Code computer automation safe to use?

Claude Code computer automation can be used safely when combined with sandbox environments, permission controls, and monitoring. Any AI agent that controls your computer should operate under human oversight to prevent errors or unauthorized access to sensitive data.

What are the main benefits of Claude Code computer automation?

The key benefits are faster task execution, less manual coding, and workflow automation that scales. It improves productivity by taking over repetitive development and operational tasks.

Who should use Claude Code computer automation in 2026?

Developers, startups, and enterprises stand to benefit most. More broadly, anyone who wants an AI agent to handle parts of their coding, deployment, or system management work can use it to streamline those tasks.